Callbreak Multiplayer

Real-time multiplayer card game

Overview

Callbreak Multiplayer was the first product where I had to think like a founder and an engineer at the same time. I co-founded Khellabs and built the game from zero to more than 1,000,000 downloads on Google Play.

The visible product was a card game. The actual engineering problem was building a low-latency multiplayer system that stayed affordable, resilient to bad mobile networks, and fun enough to retain players over time.

What I built

- Real-time multiplayer game flow and state synchronization

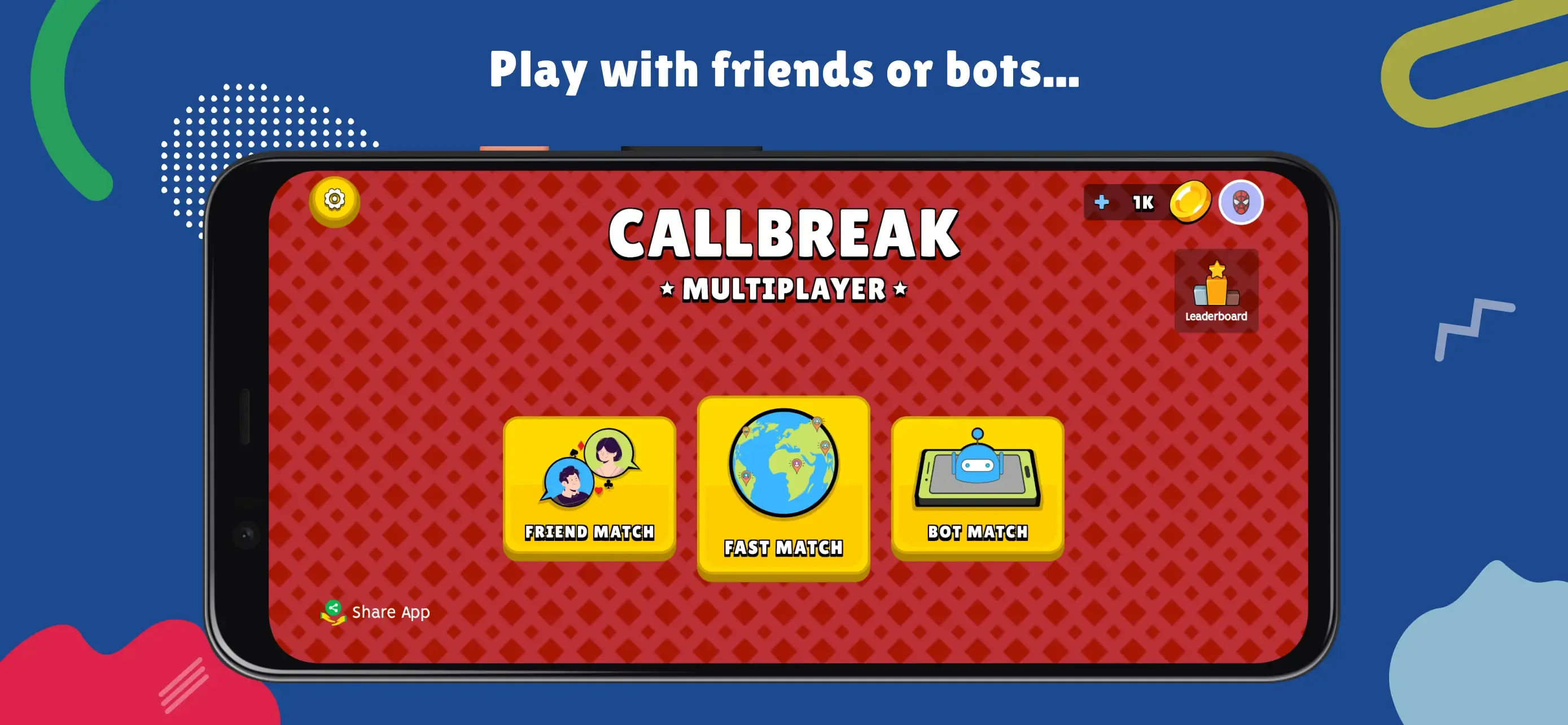

- Matchmaking and private room logic

- Rejoin flows for unreliable mobile connections

- Firebase-backed infrastructure with cost controls

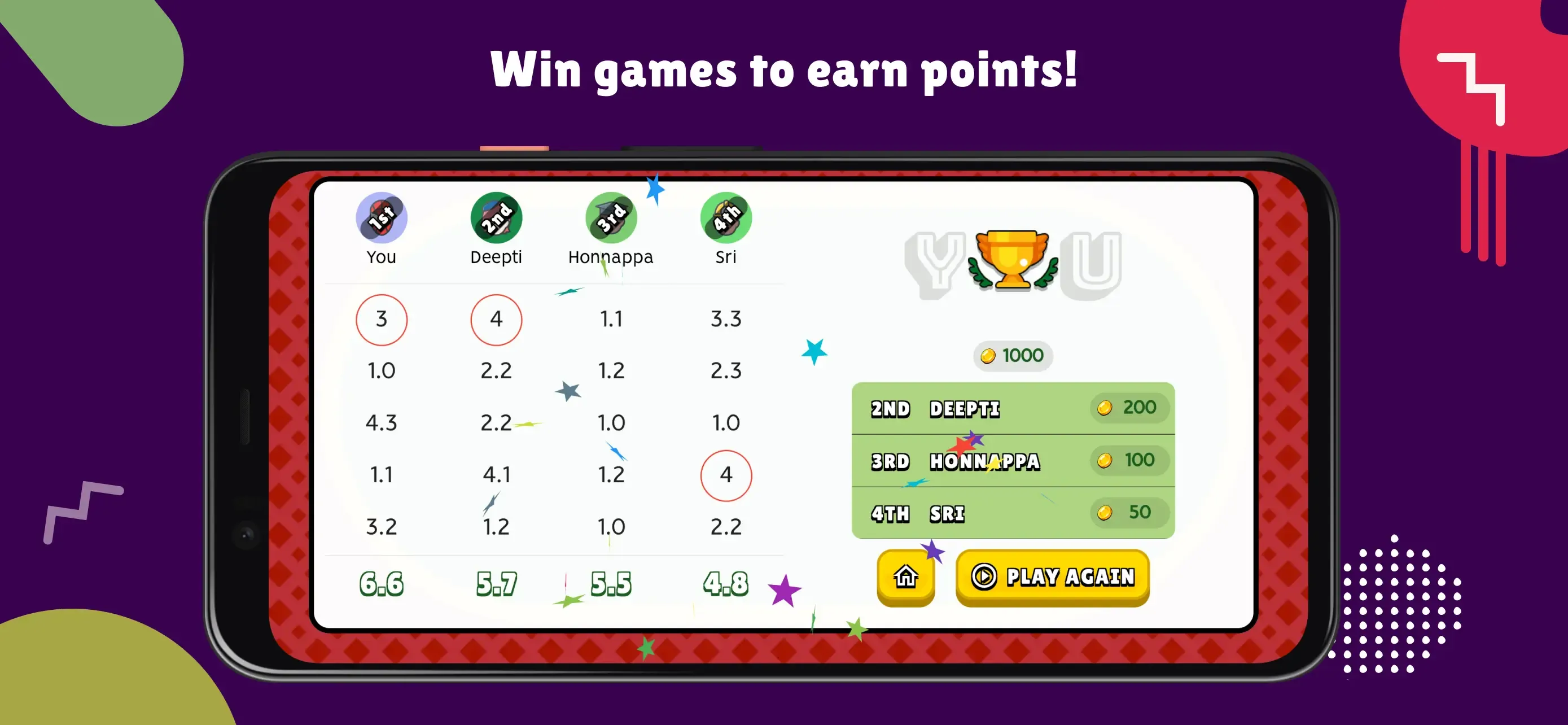

- Retention experiments informed by actual player behavior

Architecture decisions

The stack centered on Flutter for the client and Firebase for the backend. That was the right decision for a small team that needed to move quickly across Android devices with very different performance profiles.

Firebase gave us authentication, real-time data propagation, and an operational model that a small team could maintain. The tradeoff was cost and data-shape discipline. Real-time products can become surprisingly expensive if every screen is over-subscribed to updates, so I had to design carefully around read patterns and document structure.
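One way to picture the over-subscription problem is a shared subscription hub: several screens interested in the same path reuse a single upstream listener instead of each opening their own. The sketch below is illustrative only and is not the shipped code. It is written in Python for brevity (the app itself was Flutter), and `SharedSubscriptions` and the `open_upstream` callable are hypothetical names standing in for whatever real-time client is in use.

```python
class SharedSubscriptions:
    """Ref-counted listeners: one upstream subscription per path (illustrative)."""

    def __init__(self, open_upstream):
        # open_upstream(path, on_change) -> close() callable.
        # Hypothetical adapter around the real-time backend; not a Firebase API.
        self._open = open_upstream
        self._subs = {}  # path -> {"callbacks": [...], "close": callable}

    def subscribe(self, path, callback):
        entry = self._subs.get(path)
        if entry is None:
            # First subscriber for this path: open the single upstream listener.
            entry = {"callbacks": [], "close": None}
            self._subs[path] = entry
            entry["close"] = self._open(
                path, lambda data, e=entry: self._fan_out(e, data)
            )
        entry["callbacks"].append(callback)

        def unsubscribe():
            # Safe to call more than once.
            if callback not in entry["callbacks"]:
                return
            entry["callbacks"].remove(callback)
            if not entry["callbacks"]:
                # Last subscriber gone: close upstream so it stops costing reads.
                entry["close"]()
                self._subs.pop(path, None)

        return unsubscribe

    @staticmethod
    def _fan_out(entry, data):
        # Copy the list so callbacks may unsubscribe while being notified.
        for cb in list(entry["callbacks"]):
            cb(data)
```

With a hub like this, two widgets watching the same room share one upstream listener, and the listener is closed as soon as the last widget unsubscribes.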

Matchmaking and concurrency

One of the core systems was matchmaking. We needed to get players into games quickly without creating long waits or poor-quality lobbies. That required balancing queue speed with match reliability, especially during uneven traffic periods.
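As a rough illustration of that balance, the sketch below forms a room as soon as four live players are waiting, and drops queue entries whose heartbeat has gone stale so a room is never built from players who have already disappeared. It is an in-memory Python sketch under stated assumptions, not the production matchmaking logic: `MatchQueue`, its methods, and the `STALE_AFTER_S` timeout are all hypothetical.

```python
from collections import OrderedDict

ROOM_SIZE = 4          # four players per room
STALE_AFTER_S = 10.0   # hypothetical heartbeat timeout, chosen for illustration


class MatchQueue:
    """In-memory matchmaking queue keyed by player id (illustrative only)."""

    def __init__(self):
        # player_id -> last heartbeat time; insertion order is queue order.
        self._waiting = OrderedDict()

    def join(self, player_id, now):
        """Idempotent join: a repeated attempt refreshes the heartbeat but
        keeps the player's original position and adds no second slot."""
        self._waiting[player_id] = now

    def leave(self, player_id):
        self._waiting.pop(player_id, None)

    def try_match(self, now):
        """Drop stale entries, then return a full room (list of ids) or None."""
        stale = [p for p, seen in self._waiting.items()
                 if now - seen > STALE_AFTER_S]
        for player_id in stale:
            del self._waiting[player_id]
        if len(self._waiting) < ROOM_SIZE:
            return None
        # Pop the four longest-waiting players in FIFO order.
        return [self._waiting.popitem(last=False)[0] for _ in range(ROOM_SIZE)]
```

A shorter timeout favors reliable rooms at the cost of occasionally evicting players on slow connections; a longer one keeps queues fuller but risks seating someone who has already left.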

The challenge wasn’t only assigning four players to a room. It was handling the messy edges:

- players leaving mid-flow

- network interruptions

- duplicate join attempts

- stale room state

- rejoin after connection recovery

I built the room lifecycle around explicit state transitions so client logic stayed predictable. That was important because mobile multiplayer bugs often come from implicit assumptions about state.
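A minimal sketch of what explicit state transitions can look like is a lookup table of allowed moves, where any event not in the table is rejected rather than silently handled. The state and event names here (`WAITING`, `PAUSED`, `rejoin_timeout`, and so on) are assumptions made for illustration and are not the names used in the actual game.

```python
from enum import Enum


class RoomState(Enum):
    WAITING = "waiting"      # collecting players
    PLAYING = "playing"      # game in progress
    PAUSED = "paused"        # a player dropped; waiting for a rejoin
    FINISHED = "finished"    # game completed normally
    ABANDONED = "abandoned"  # room closed without finishing


# Every legal move is listed; anything absent is illegal by construction.
TRANSITIONS = {
    (RoomState.WAITING, "room_full"): RoomState.PLAYING,
    (RoomState.WAITING, "all_left"): RoomState.ABANDONED,
    (RoomState.PLAYING, "player_dropped"): RoomState.PAUSED,
    (RoomState.PLAYING, "game_over"): RoomState.FINISHED,
    (RoomState.PAUSED, "player_rejoined"): RoomState.PLAYING,
    (RoomState.PAUSED, "rejoin_timeout"): RoomState.ABANDONED,
}


class IllegalTransition(Exception):
    """Raised when an event is not valid in the room's current state."""


def apply_event(state, event):
    """Return the next state, or raise instead of guessing."""
    try:
        return TRANSITIONS[(state, event)]
    except KeyError:
        raise IllegalTransition(f"{event!r} not allowed in {state.name}") from None
```

Because a stale or duplicated event such as a second `room_full` raises immediately, it shows up as a loud error during testing instead of a corrupted room later.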

Firebase scaling and cost control

As usage grew, Firebase cost discipline became a real engineering concern. I optimized reads and subscriptions aggressively, reduced unnecessary listeners, and shaped data to avoid expensive fan-out patterns.

That work mattered. At scale, small inefficiencies repeated across active rooms and sessions become business problems, not just technical ones.
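A back-of-the-envelope calculation shows how quickly this compounds. The sketch assumes a billing model in which each document change delivered to a listener counts as one read (as Cloud Firestore does for snapshot listeners), one write per card played, and a purely hypothetical volume of 10,000 rounds. None of these figures are measurements from the real game.

```python
def billed_reads(listeners_per_client, clients_per_room, updates_per_round, rounds):
    """Reads billed if every update is delivered once to every listener."""
    return listeners_per_client * clients_per_room * updates_per_round * rounds


CARD_PLAYS_PER_ROUND = 52   # 4 players x 13 tricks in one Callbreak round
ROUNDS = 10_000             # hypothetical volume, for illustration only

# Each client holds exactly one listener on the room state.
lean = billed_reads(1, 4, CARD_PLAYS_PER_ROUND, ROUNDS)

# Each client accidentally holds three listeners on the same data
# (for example, one per screen that was never cleaned up).
over_subscribed = billed_reads(3, 4, CARD_PLAYS_PER_ROUND, ROUNDS)

print(lean)             # 2080000
print(over_subscribed)  # 6240000
```

Under these assumptions, two forgotten listeners per client triple the read count without changing anything the player sees.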

Product experimentation

Because we owned the product end to end, we could test ideas quickly. We ran retention-oriented experiments around gameplay pacing, reward loops, and user friction points. Some changes were purely technical. Others were product decisions enabled by fast engineering turnaround.

That feedback loop taught me a lot about building for real users instead of abstract “best practices.”

What I’d do differently

If I rebuilt the system today, I would isolate multiplayer session orchestration more explicitly from profile and progression data. We made good tradeoffs for a small, fast-moving team, but stronger separation would have made later iteration easier and some operational debugging faster.

I would also invest earlier in internal analytics and simulation tooling. When you’re tuning real-time systems, a small amount of observability goes a very long way.